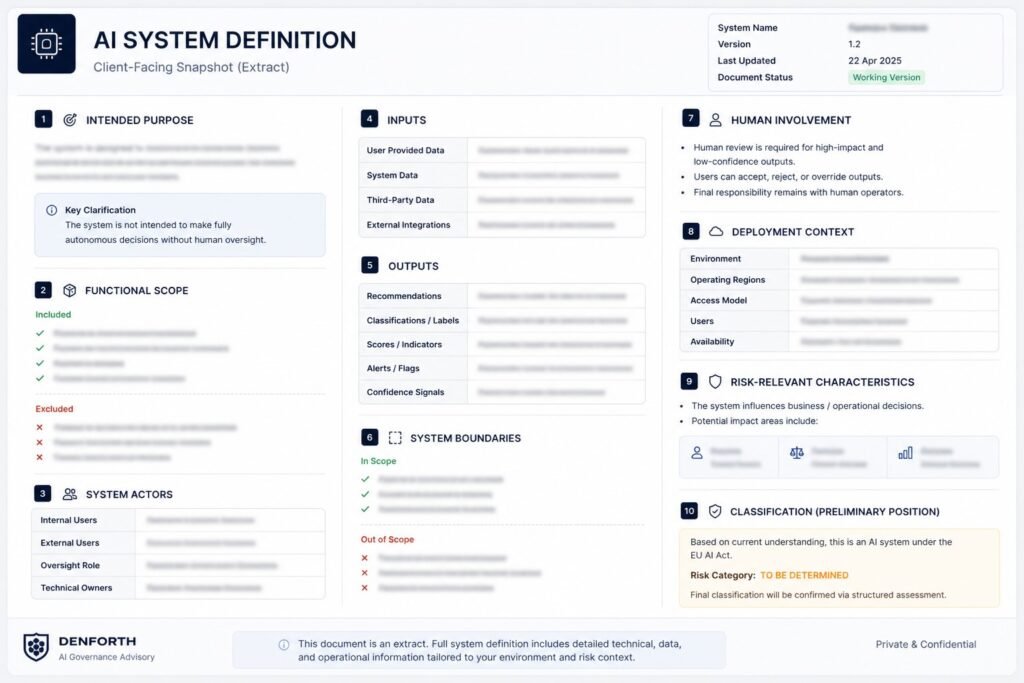

- System purpose and scope

- Inputs, outputs, and boundaries

- Human review and control points

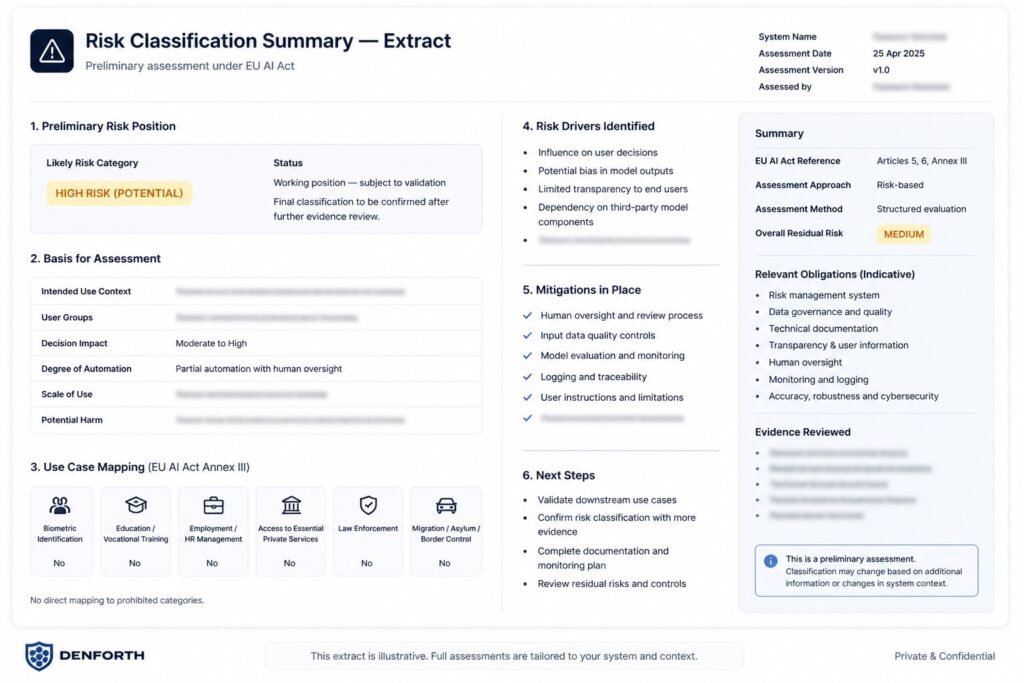

- Risk rationale

- Key exposure signals

- Review and mitigation priorities

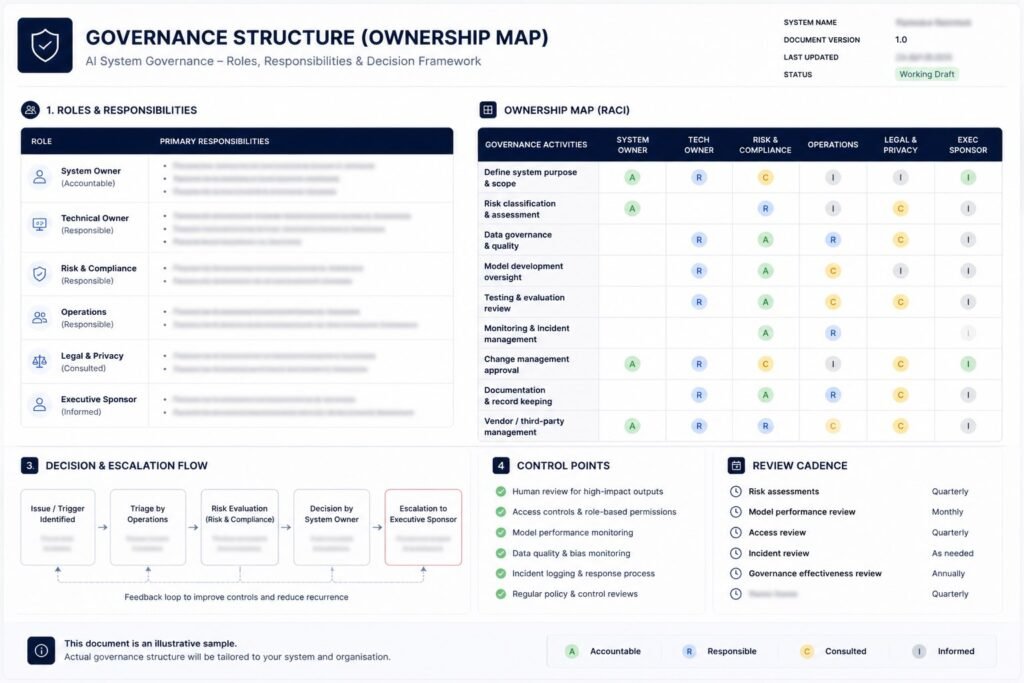

- Ownership roles

- Escalation logic

- Review cadence

No commitment. Start with structured input, or talk to us first.