The Hidden Risk in AI Compliance: It’s Not the Model – It’s the Supply Chain

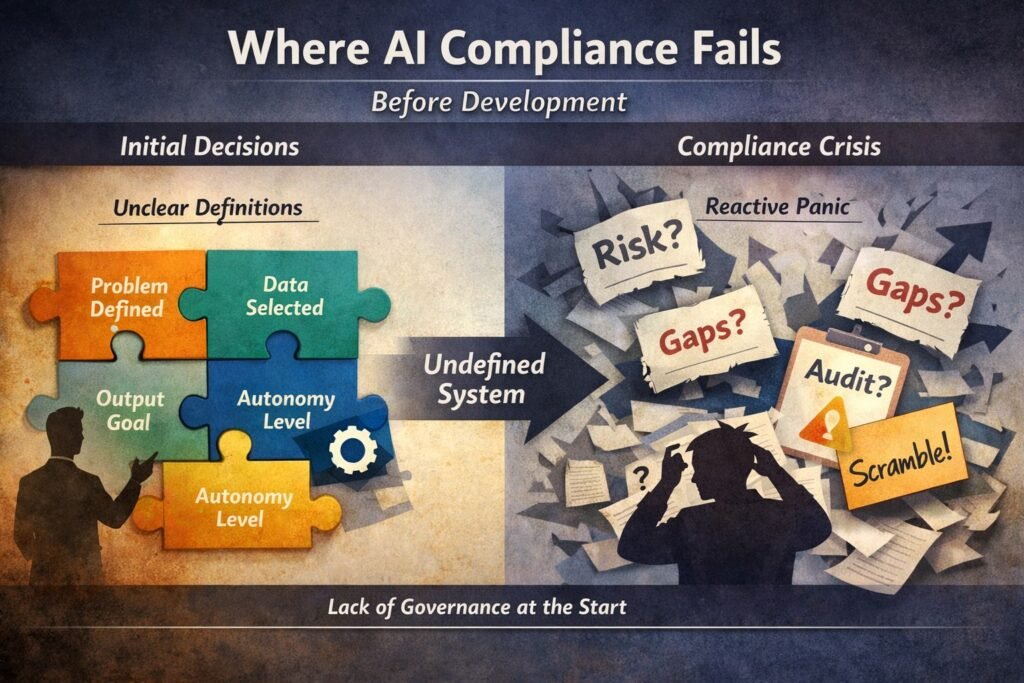

Most teams think AI compliance is about what they build. It’s not. It’s about everything they depend on. The model is only the visible layer. The real exposure sits underneath, in data sources, third-party APIs, fine-tuning pipelines, and tooling choices that no one fully documents. And that’s where things start to break. The uncomfortable reality […]
