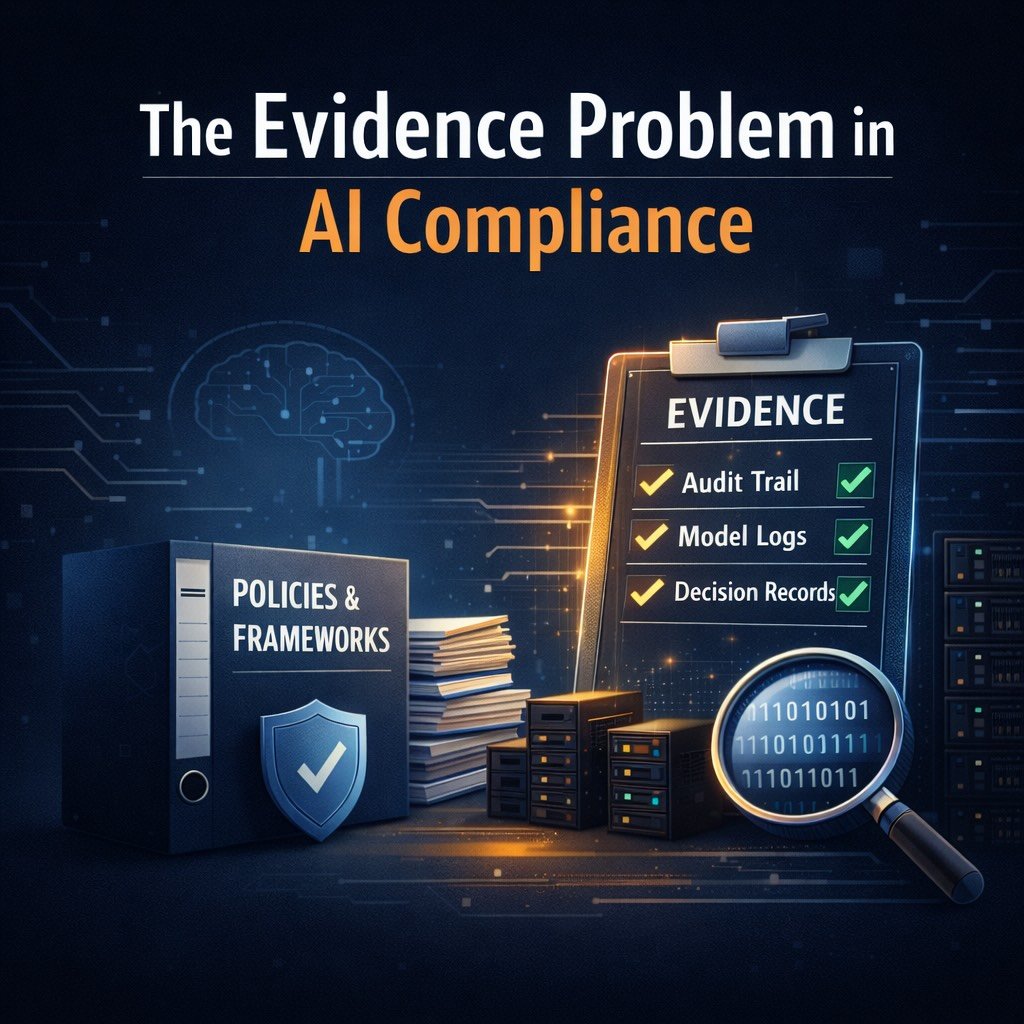

Why AI Compliance Fails Before It Starts: The Evidence Problem

Most AI compliance efforts don’t fail during audits. They fail long before: quietly, structurally, and almost invisibly. The failure begins at the moment a company confuses documentation with evidence.

The illusion of preparedness

In the early stages of AI governance, most teams move in a predictable way. They assemble policies. They define internal principles. They produce frameworks that look […]
