Most discussions about EU AI Act compliance begin with obligations: risk management, documentation, conformity assessment. For many teams, this immediately feels overwhelming.

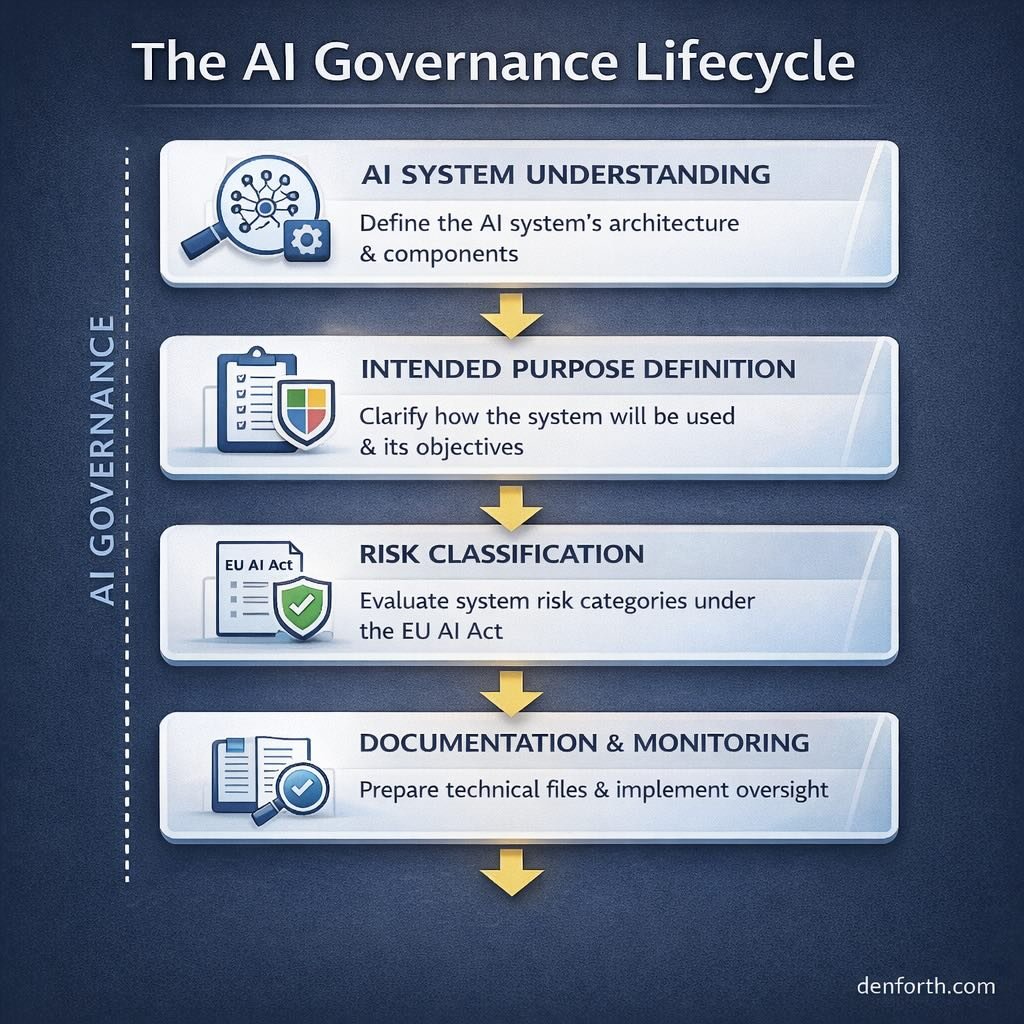

In practice, compliance does not start with obligations. It starts with visibility.

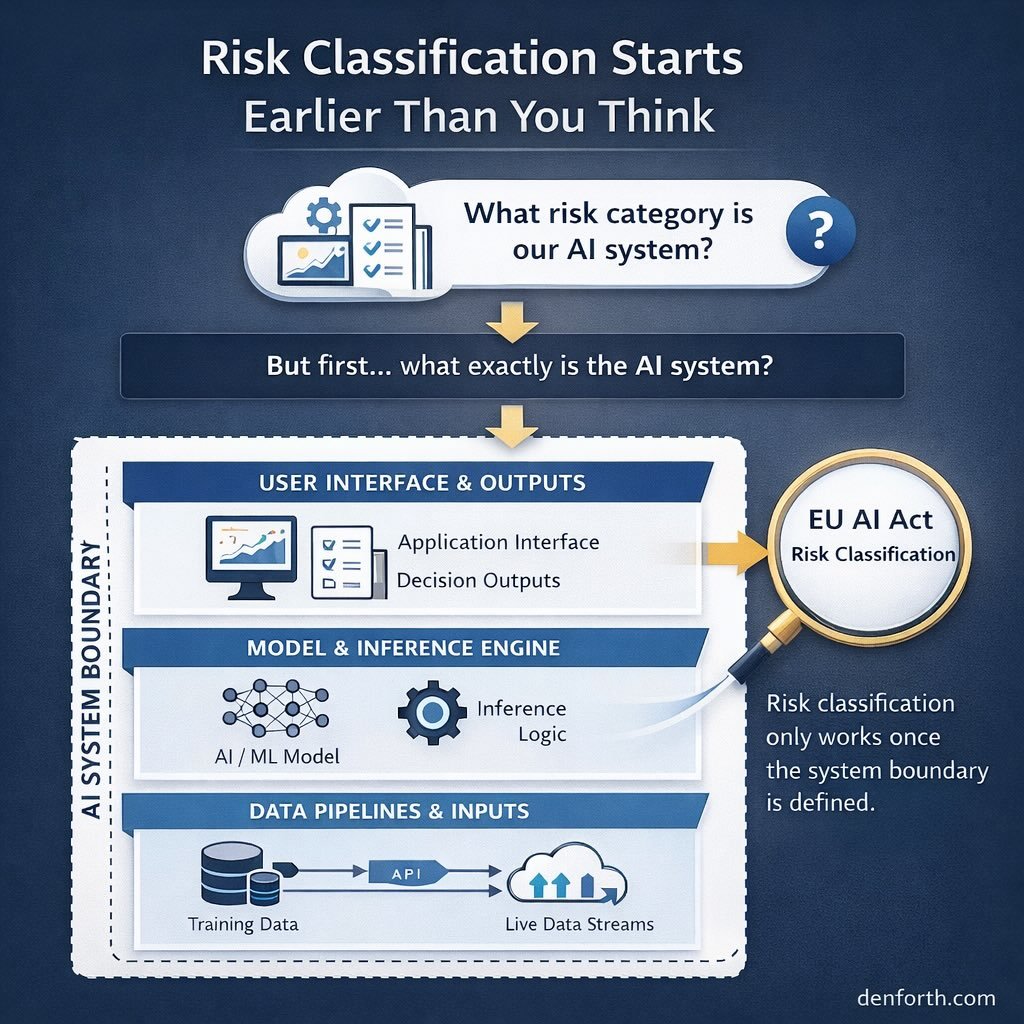

Before a team can classify risk, map roles, or plan timelines, it needs a clear picture of one thing: what AI systems actually exist inside the organisation.

This is where an AI inventory comes in.

An AI inventory is not a regulatory requirement in itself. The EU AI Act does not prescribe a specific format or template for it. Yet without an inventory, most compliance decisions become guesswork.

Teams often underestimate how many AI systems they use. Beyond core products, AI appears in internal tools, third-party services, automated decision support, analytics pipelines, and customer-facing features. Some systems are obvious. Others are embedded quietly and rarely revisited.

An inventory brings these systems into view.

The purpose of an AI inventory is not documentation for regulators. It is internal clarity.

A well-maintained inventory allows teams to answer basic but essential questions. Which systems perform inference? Which influence decisions about people? Which are built internally, and which are sourced externally? Which are experimental, and which are already in production?

Without this baseline, role mapping and risk classification become abstract exercises.

From a practical perspective, an AI inventory usually captures a small set of core attributes for each system:

- where the system is used and for what purpose

- whether it qualifies as an AI system under the Act

- whether the organisation acts as provider or deployer (or in another role the Act defines, such as importer or distributor)

- whether the use context could trigger high-risk classification

This level of detail is usually sufficient at an early stage. Anything more tends to slow teams down without improving decision quality.
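To make this concrete, here is a minimal sketch of what one inventory record could look like in Python. The Act does not prescribe any format, so the field names, the `Role` values, and the example systems below are all hypothetical. The two boolean flags are early working judgments by the team, not legal determinations.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"
    OTHER = "other"  # assumption: catch-all for roles such as importer or distributor


@dataclass
class InventoryEntry:
    name: str               # hypothetical internal identifier
    used_in: str            # where the system is used
    purpose: str            # what it is used for
    is_ai_system: bool      # working judgment: does it qualify as an AI system under the Act?
    role: Role              # the organisation's role for this system
    may_be_high_risk: bool  # working judgment: could the use context trigger high-risk classification?


# Illustrative entries only; none of these systems come from a real organisation.
inventory = [
    InventoryEntry("cv-screening", "HR", "rank job applicants",
                   True, Role.DEPLOYER, True),
    InventoryEntry("log-anomaly-detector", "Platform ops", "flag unusual server logs",
                   True, Role.PROVIDER, False),
    InventoryEntry("invoice-rules", "Finance", "route invoices by fixed rules",
                   False, Role.DEPLOYER, False),
]


def needs_attention(entries):
    """Return names of systems that are in scope and may be high-risk."""
    return [e.name for e in entries if e.is_ai_system and e.may_be_high_risk]


print(needs_attention(inventory))  # ['cv-screening']
```

A spreadsheet with the same columns would serve equally well. What matters is that every system gets the same few attributes recorded, so the list can be filtered down to what deserves a closer look.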

One common mistake is treating the inventory as a one-time exercise. In reality, AI inventories are living artefacts. Systems evolve. Use cases change. Models are retrained or repurposed.

Teams that revisit their inventory periodically tend to spot regulatory implications early. Teams that treat it as a static list often discover issues late, when changes are harder to manage.
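One lightweight way to keep the inventory alive is to record when each entry was last reviewed and flag the stale ones. The sketch below assumes a hypothetical mapping of system names to review dates and an arbitrary 180-day interval. Neither comes from the Act, so pick whatever cadence fits how often your systems change.

```python
from datetime import date, timedelta


def overdue_for_review(last_reviewed: dict[str, date],
                       today: date,
                       max_age_days: int = 180) -> list[str]:
    """Return names of entries whose last review is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(name for name, reviewed in last_reviewed.items()
                  if reviewed < cutoff)


# Hypothetical review log; names and dates are illustrative only.
review_log = {
    "cv-screening": date(2025, 1, 10),
    "log-anomaly-detector": date(2025, 6, 1),
}

print(overdue_for_review(review_log, today=date(2025, 9, 1)))  # ['cv-screening']
```

A time-based check is only a backstop. Retraining a model or repurposing a system is a better trigger for revisiting its entry than any fixed interval.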

Another frequent issue is confusing an AI inventory with a technical asset register. The two overlap, but they are not the same.

The inventory is not about infrastructure or code ownership. It is about decision impact and regulatory relevance. A simple model in a sensitive context, such as screening job applicants, matters more than a complex model in a low-stakes one.

A clear inventory also reduces unnecessary work. Teams often rush into documentation or controls for systems that ultimately fall outside the Act’s scope or high-risk categories. Inventory-first approaches prevent this by narrowing attention to what actually matters.

AI inventory work is rarely visible from the outside. There are no public announcements or badges attached to it. Yet it is one of the most effective ways to de-risk EU AI Act timelines.

Once systems are visible, everything else becomes easier to sequence.

Compliance does not begin with paperwork. It begins with knowing what you are dealing with.