A practical governance issue most AI startups discover only when customers begin asking questions.

Many AI SaaS companies assume that EU AI Act compliance will mainly involve reading regulatory text and mapping their product to the correct category. In practice, the difficulty appears much earlier.

When founders or product leaders are asked about the risk classification of their AI system, the conversation often slows down. The reason is not that the regulation is unclear, but that the system itself has never been formally defined.

Before any meaningful discussion about EU AI Act classification can happen, a company must answer a simpler question:

What exactly is the AI system?

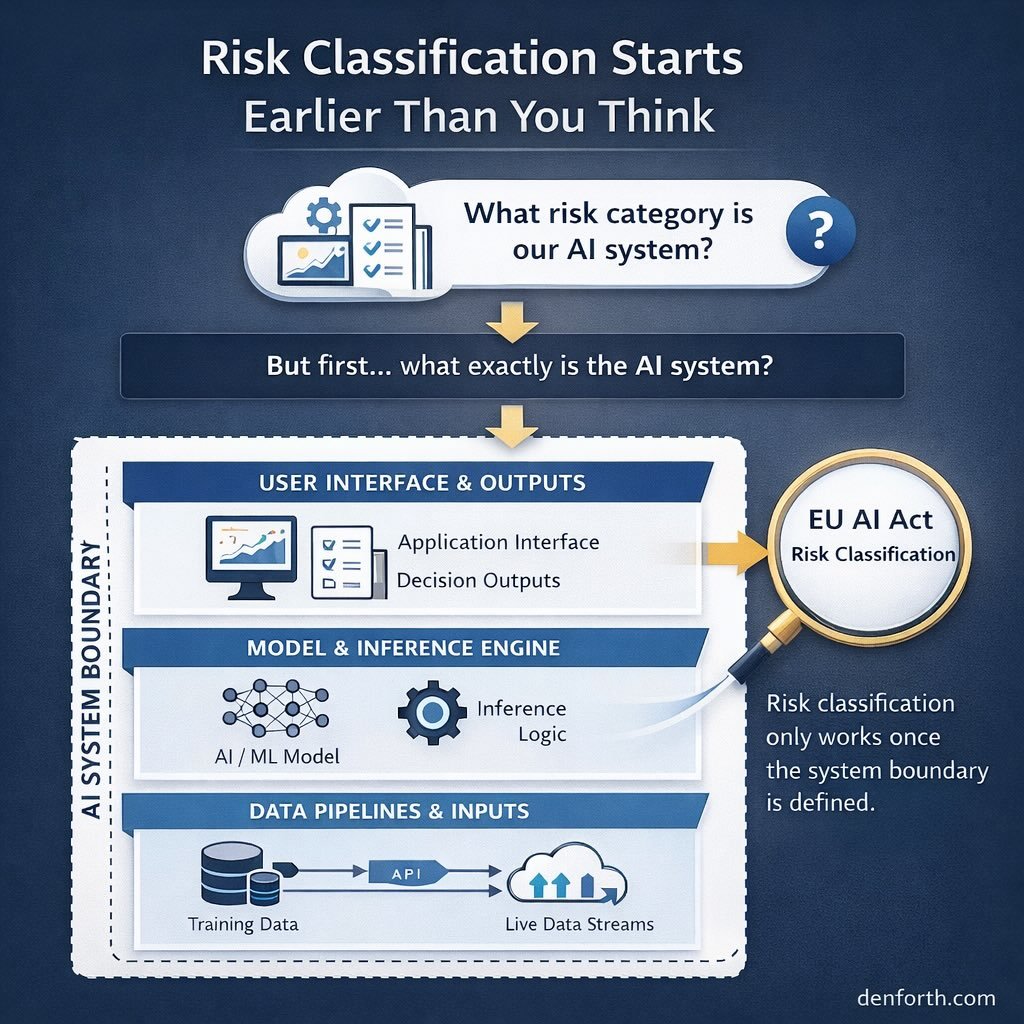

For many AI-enabled SaaS products, this is less obvious than it sounds. Modern AI products rarely consist of a single model running independently. They are usually made up of several interconnected components. Data pipelines feed models. Models generate outputs. Applications present those outputs to users. Monitoring systems track performance and behavior over time. In day-to-day development, teams focus on improving accuracy, releasing features, and scaling infrastructure. Very few teams stop to define the formal boundary of the AI system itself.

However, governance frameworks such as the EU AI Act require exactly this level of clarity. Without a clear system definition, risk classification becomes difficult. Teams start asking questions that do not have obvious answers.

Which components belong to the AI system?

What decisions does the system influence?

Where does automated inference occur?

What parts of the product must be monitored?

Engineering teams often think of the system in terms of the model. Product teams may define it around the user feature. Legal teams may define it around the decision impact. Each perspective makes sense in isolation. But governance requires a single, defensible explanation of the system as a whole. When that explanation does not exist, several problems appear quickly.

Internal conversations about risk classification stall. Documentation becomes inconsistent. Responsibility for monitoring and oversight becomes unclear. More importantly, when enterprise customers begin asking governance questions, the company struggles to provide clear answers. This is why the first real step in AI governance work is usually not regulatory interpretation.

It is system mapping.

System mapping means describing how the AI system actually operates. It identifies the components that form the system, the data that flows through it, the outputs that reach users, and the teams responsible for maintaining each part.
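
As a rough illustration, a system map can start as a small structured record rather than a long document. The sketch below is one possible shape, not a format the EU AI Act prescribes: the class names, fields, and the `ticket-triage` example product are all hypothetical, chosen only to mirror the four elements above (components, data flows, user-facing outputs, and owning teams).

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Component:
    """One part of the AI system (all fields are illustrative assumptions)."""
    name: str
    kind: str                      # e.g. "data_pipeline", "model", "application", "monitoring"
    owner: Optional[str] = None    # responsible team; None means nobody is assigned yet
    runs_inference: bool = False   # True where automated inference occurs


@dataclass(frozen=True)
class DataFlow:
    """Data moving from one named component to another."""
    source: str
    target: str
    description: str


@dataclass
class SystemMap:
    """A single shared description of the system boundary."""
    name: str
    components: list = field(default_factory=list)
    flows: list = field(default_factory=list)
    user_facing_outputs: list = field(default_factory=list)

    def unowned(self):
        """Names of components with no responsible team."""
        return [c.name for c in self.components if c.owner is None]

    def inference_points(self):
        """Names of components where automated inference occurs."""
        return [c.name for c in self.components if c.runs_inference]

    def dangling_flows(self):
        """Flows that touch a component missing from the map."""
        known = {c.name for c in self.components}
        return [f for f in self.flows
                if f.source not in known or f.target not in known]


# Hypothetical product used only to exercise the sketch.
example = SystemMap(
    name="ticket-triage",
    components=[
        Component("ingest", "data_pipeline", owner="data-eng"),
        Component("classifier", "model", owner="ml", runs_inference=True),
        Component("dashboard", "application", owner="product"),
        Component("drift-monitor", "monitoring"),  # no owner assigned
    ],
    flows=[
        DataFlow("ingest", "classifier", "cleaned ticket text"),
        DataFlow("classifier", "dashboard", "predicted priority label"),
        DataFlow("classifier", "audit-log", "raw predictions"),  # target not mapped
    ],
    user_facing_outputs=["predicted priority label"],
)

print("Unowned components:", example.unowned())
print("Inference points:", example.inference_points())
print("Flows leaving the mapped boundary:",
      [(f.source, f.target) for f in example.dangling_flows()])
```

Even a toy map like this surfaces the kinds of gaps described earlier: a monitoring component with no owner, and a data flow to something nobody has placed inside or outside the system boundary.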

Once this structure becomes visible, governance discussions become far easier. Risk classification can be reasoned about more clearly. Documentation can be structured in a coherent way. Monitoring responsibilities become easier to assign. In other words, governance stops being abstract.

Risk classification under the EU AI Act is often treated as a purely legal exercise. In practice, it also works as an architectural one: it forces organizations to articulate how their AI system actually works.

For many AI SaaS companies, this moment becomes the beginning of real governance maturity. Not because regulators demand it, but because the company finally develops a clear internal understanding of its own AI system architecture. Before documentation frameworks or compliance programs are introduced, this clarity is essential.

AI governance begins with defining the system that is being governed.