When companies first encounter the EU AI Act, the conversation often begins with documentation.

Teams ask questions like:

“What documentation do we need?”

“Do we have to prepare technical files?”

These questions assume that AI governance begins with writing documents.

In reality, documentation usually comes much later in the process.

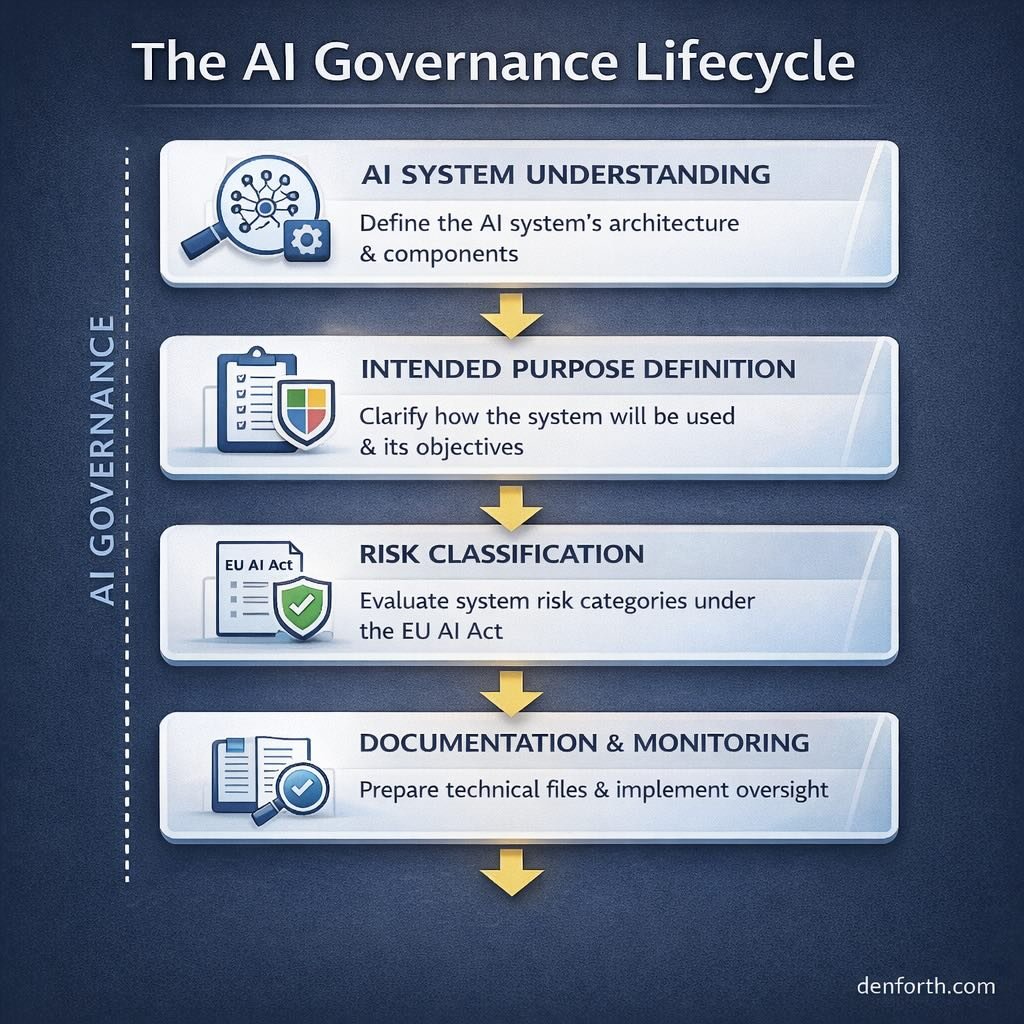

AI governance follows a lifecycle that starts with understanding how the AI system itself operates.

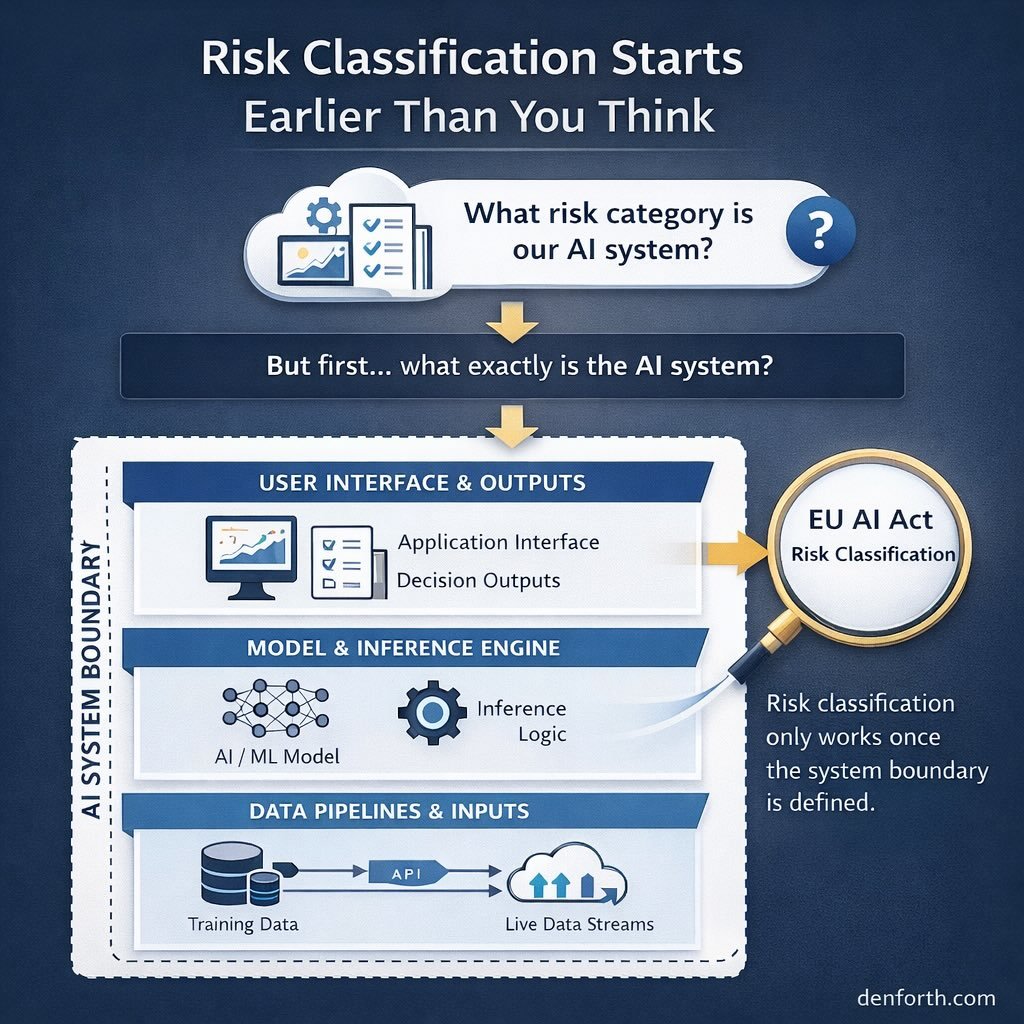

Before any compliance documentation can be created, organizations must first understand the structure of the system they are deploying. In modern SaaS products, AI is rarely a single isolated component. It is typically embedded in a larger architecture involving data pipelines, model inference layers, APIs, user interfaces, and downstream decision processes.

The first step in governance therefore focuses on system understanding. Teams map the AI system’s boundary and identify how data enters the system, how models generate outputs, and how those outputs affect users or automated decisions.
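One way to make this mapping concrete is to record the boundary as a small inventory. The sketch below is illustrative only: the `Component` and `SystemBoundary` classes, the `kind` labels, and the ticket-triage example are all hypothetical, not something prescribed by the EU AI Act or any specific tool.

```python
from dataclasses import dataclass, field


@dataclass
class Component:
    name: str
    kind: str  # hypothetical labels: "data_pipeline", "model_inference", "api", "ui", "decision_process"
    inputs: list[str] = field(default_factory=list)  # upstream components or data sources
    affects_users: bool = False  # True if the output reaches users or automated decisions


@dataclass
class SystemBoundary:
    system_name: str
    components: list[Component] = field(default_factory=list)

    def external_inputs(self) -> set[str]:
        """Inputs not produced by any component inside the boundary."""
        internal = {c.name for c in self.components}
        return {src for c in self.components for src in c.inputs if src not in internal}

    def decision_points(self) -> list[str]:
        """Components whose outputs affect users or automated decisions."""
        return [c.name for c in self.components if c.affects_users]


# Hypothetical SaaS example: an AI feature that triages support tickets.
boundary = SystemBoundary(
    system_name="support-ticket-triage",
    components=[
        Component("ingest", "data_pipeline", inputs=["customer_tickets"]),
        Component("classifier", "model_inference", inputs=["ingest"]),
        Component("routing_api", "api", inputs=["classifier"]),
        Component("auto_escalation", "decision_process",
                  inputs=["routing_api"], affects_users=True),
    ],
)

print(boundary.external_inputs())  # where data enters the system
print(boundary.decision_points())  # where outputs affect users or decisions
```

Even a rough inventory like this answers the two questions that matter at this stage: where data crosses into the system, and where model outputs start to influence people or automated decisions.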

Once the system boundary is clear, the next step is defining the intended purpose of the system. This includes clarifying what the system is designed to do, how it will be used, and what types of decisions may rely on its outputs.

Only after these foundations are established can the organization perform risk classification. At this stage the system is evaluated against the risk tiers of the EU AI Act, commonly summarized as prohibited, high-risk, limited-risk, and minimal-risk.
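The ordering described here can be enforced in tooling. The following is a minimal sketch, assuming a hypothetical `GovernanceRecord` that refuses to store a risk tier until the boundary and intended purpose exist. It does not perform the classification itself, which remains a legal and organizational judgment; it only records the outcome in the right order.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    # The four tiers named in the text above.
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    MINIMAL = "minimal-risk"


@dataclass
class GovernanceRecord:
    """Hypothetical record that enforces the lifecycle order."""
    system_name: str
    boundary_mapped: bool = False
    intended_purpose: Optional[str] = None
    risk_tier: Optional[RiskTier] = None

    def classify(self, tier: RiskTier) -> None:
        # Classification is only meaningful once the earlier steps are done.
        if not self.boundary_mapped:
            raise ValueError("map the system boundary before classifying")
        if not self.intended_purpose:
            raise ValueError("define the intended purpose before classifying")
        self.risk_tier = tier


record = GovernanceRecord("support-ticket-triage")

try:
    record.classify(RiskTier.LIMITED)  # too early: nothing is mapped yet
except ValueError as err:
    print(f"blocked: {err}")

record.boundary_mapped = True
record.intended_purpose = "Route incoming support tickets to the right team"
record.classify(RiskTier.LIMITED)  # illustrative value only, not a legal assessment
print(record.risk_tier.value)
```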

If the system triggers regulatory obligations, teams then construct the documentation layer that demonstrates how the system was designed, tested, and monitored.

Finally, governance becomes operational through ongoing monitoring and lifecycle management, where organizations track model performance, operational risks, and system changes after deployment.
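As a rough illustration of what operational monitoring can look like, the sketch below keeps a log of performance readings and system changes and lists reasons to revisit the governance record. Everything in it is an assumption made for the example: the `MonitoringLog` class, the choice of accuracy as the tracked metric, and the 0.05 tolerance are hypothetical and are not thresholds taken from the EU AI Act.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class MonitoringLog:
    baseline_accuracy: float
    tolerance: float = 0.05  # hypothetical allowed drop below baseline
    accuracy_readings: list[tuple[date, float]] = field(default_factory=list)
    system_changes: list[tuple[date, str]] = field(default_factory=list)

    def record_accuracy(self, day: date, value: float) -> None:
        self.accuracy_readings.append((day, value))

    def record_change(self, day: date, description: str) -> None:
        self.system_changes.append((day, description))

    def review_reasons(self) -> list[str]:
        """Reasons to re-open the governance review, if any."""
        reasons = []
        if self.accuracy_readings:
            day, latest = self.accuracy_readings[-1]
            if latest < self.baseline_accuracy - self.tolerance:
                reasons.append(
                    f"accuracy {latest:.2f} on {day} is below "
                    f"baseline {self.baseline_accuracy:.2f}"
                )
        for day, description in self.system_changes:
            reasons.append(f"system change on {day}: {description}")
        return reasons


log = MonitoringLog(baseline_accuracy=0.90)
log.record_accuracy(date(2025, 1, 15), 0.92)
log.record_accuracy(date(2025, 2, 15), 0.80)
log.record_change(date(2025, 2, 20), "swapped classifier model version")

for reason in log.review_reasons():
    print(reason)
```

The point of the design is that a system change or a performance drop feeds back into the earlier lifecycle steps instead of being handled as an isolated operations issue.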

Seen this way, AI governance is not a single compliance task. It is a structured lifecycle connecting product architecture, regulatory evaluation, documentation, and operational oversight.

Organizations that understand this lifecycle early avoid one of the most common mistakes in AI compliance: attempting to produce documentation before understanding the system it is supposed to describe.