Most teams think AI compliance starts when the system is built.

It doesn’t.

By the time you are thinking about documentation, testing, or risk classification, most of the important decisions have already been made — implicitly, and often without governance.

Those early decisions are where compliance actually begins.

The EU AI Act does not define an AI system as a finished product. Its definition in Article 3(1) covers how a system is designed to operate and allows that it may keep adapting after deployment.

It explicitly recognizes that AI systems:

- infer outputs such as predictions, content, recommendations, or decisions

- operate with varying levels of autonomy

- can influence physical or virtual environments

This means compliance is not tied to a moment.

It is tied to a structure.

The real starting point is not the model — it is the decision

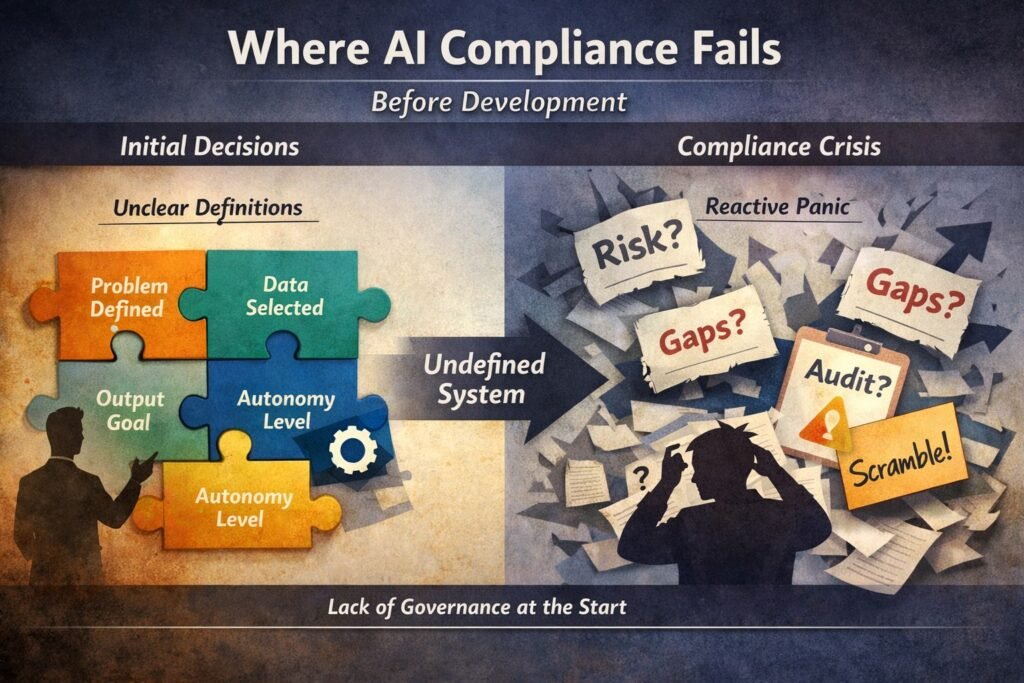

Before any model is trained, teams already decide:

- what problem is being solved

- what type of output is acceptable

- what data will be used or excluded

- what level of autonomy is tolerated

These decisions define the system’s risk surface long before any technical implementation.

And yet, they are rarely documented.
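One lightweight way to close that gap is to write the decisions down in a structured record and check which ones are still empty. The sketch below is only an illustration: the `DecisionRecord` class, its field names, and the `undocumented` helper are hypothetical and do not come from the AI Act or any standard.

```python
from dataclasses import dataclass, field, fields


@dataclass
class DecisionRecord:
    """Hypothetical record of pre-model decisions; field names are illustrative."""
    problem: str = ""
    acceptable_outputs: list[str] = field(default_factory=list)
    data_included: list[str] = field(default_factory=list)
    data_excluded: list[str] = field(default_factory=list)
    autonomy_level: str = ""  # e.g. "human reviews every output"


def undocumented(record: DecisionRecord) -> list[str]:
    """Return the names of decisions that were left empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


# Example: the problem and autonomy level were decided, the data scope was not.
record = DecisionRecord(
    problem="Rank incoming support tickets by urgency",
    acceptable_outputs=["priority score"],
    autonomy_level="human reviews every ranking",
)
print(undocumented(record))  # ['data_included', 'data_excluded']
```

Treating an empty list as "undocumented" is a simplification. A team that deliberately excludes no data would want to record that choice explicitly rather than leave the field blank.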

This is where most compliance failures originate

When governance is missing at the decision stage:

- risk classification becomes reactive

- documentation becomes reconstruction

- accountability becomes unclear

Instead of explaining a system, teams end up justifying it.

The regulatory logic is consistent

The AI Act follows a risk-based approach, where obligations scale with the potential impact of the system.

But this approach assumes something critical:

👉 that the system boundaries and intended purpose are already clearly defined

Without that, risk classification becomes guesswork.

The hidden dependency: system definition

Before you can classify risk, you need to answer:

- What exactly is the AI system?

- What decisions does it influence?

- Who is affected by its outputs?

If these answers are unstable, everything downstream becomes unstable:

- classification

- documentation

- monitoring

- audit readiness
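The dependency can be made explicit by refusing to start classification until the three questions have answers. This is a minimal sketch under assumptions: `SystemDefinition`, `open_questions`, and `ready_for_classification` are hypothetical names, and a non-empty answer is treated as a sufficient answer, which a real review would not accept.

```python
from dataclasses import dataclass, field


@dataclass
class SystemDefinition:
    """Hypothetical structure; one field per definition question."""
    what_it_is: str = ""
    decisions_influenced: list[str] = field(default_factory=list)
    affected_parties: list[str] = field(default_factory=list)


# Maps each field to the question it is meant to answer.
QUESTIONS = {
    "what_it_is": "What exactly is the AI system?",
    "decisions_influenced": "What decisions does it influence?",
    "affected_parties": "Who is affected by its outputs?",
}


def open_questions(definition: SystemDefinition) -> list[str]:
    """List the definition questions that still lack an answer."""
    return [q for name, q in QUESTIONS.items() if not getattr(definition, name)]


def ready_for_classification(definition: SystemDefinition) -> bool:
    """Classification should only start once no question is open."""
    return not open_questions(definition)


draft = SystemDefinition(what_it_is="CV screening model used by recruiters")
print(ready_for_classification(draft))  # False
print(open_questions(draft))
```

The check does not classify anything. It only blocks the downstream steps until the definition they depend on exists.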

Why this matters for real companies

In practice, this shows up as:

- multiple teams describing the same system differently

- unclear ownership between product, data, and legal

- last-minute compliance efforts before procurement or audit

At that point, the cost is not just compliance effort.

It is loss of control over the system narrative.
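The first symptom, teams describing the same system differently, can be surfaced early by collecting each team's description in the same shape and comparing them field by field. The sketch below assumes descriptions are plain dictionaries of free text; the `divergent_fields` function and the example field names are hypothetical, and exact-match comparison after lowercasing is a deliberately crude stand-in for human review.

```python
def divergent_fields(
    descriptions: dict[str, dict[str, str]],
) -> dict[str, dict[str, str]]:
    """Return fields where teams gave different answers, with each team's answer."""
    all_fields = {name for desc in descriptions.values() for name in desc}
    conflicts: dict[str, dict[str, str]] = {}
    for name in sorted(all_fields):
        # A team that did not fill in the field counts as a disagreement.
        answers = {
            team: desc.get(name, "<missing>") for team, desc in descriptions.items()
        }
        normalized = {answer.strip().lower() for answer in answers.values()}
        if len(normalized) > 1:
            conflicts[name] = answers
    return conflicts


descriptions = {
    "product": {"purpose": "Prioritize support tickets", "autonomy": "advisory"},
    "data": {"purpose": "prioritize support tickets", "autonomy": "fully automated"},
    "legal": {"purpose": "Prioritize support tickets"},
}
for name, answers in divergent_fields(descriptions).items():
    print(name, answers)
```

In this example only `autonomy` is reported: the three `purpose` answers differ in capitalization alone, while the teams disagree on autonomy and legal has no answer at all.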

The shift: from compliance tasks to governance structure

Teams that handle this well do something different.

They do not start with:

“What documents do we need?”

They start with:

“What exactly are we building, and how does it behave?”

From there, everything else becomes structured:

- risk classification becomes repeatable, not guesswork

- documentation becomes descriptive, not defensive

- governance becomes embedded, not external

Final thought

AI compliance does not begin with regulation.

It begins with definition.

And if that definition is unclear, every compliance step that follows will carry that uncertainty forward.