Most teams don’t fail at compliance because they lack effort.

They fail because they start in the wrong place.

Under the EU AI Act, that mistake is almost always the same:

They begin building documentation, policies, and controls before they have correctly classified their system.

And once that happens, everything that follows is misaligned.

The illusion of progress

When a team starts working on:

- policies

- templates

- internal guidelines

- risk registers

- documentation packs

…it feels like progress.

There is movement. There are artifacts. There are deliverables.

But none of it answers the only question that matters at the beginning:

What are we actually building in the eyes of the regulation?

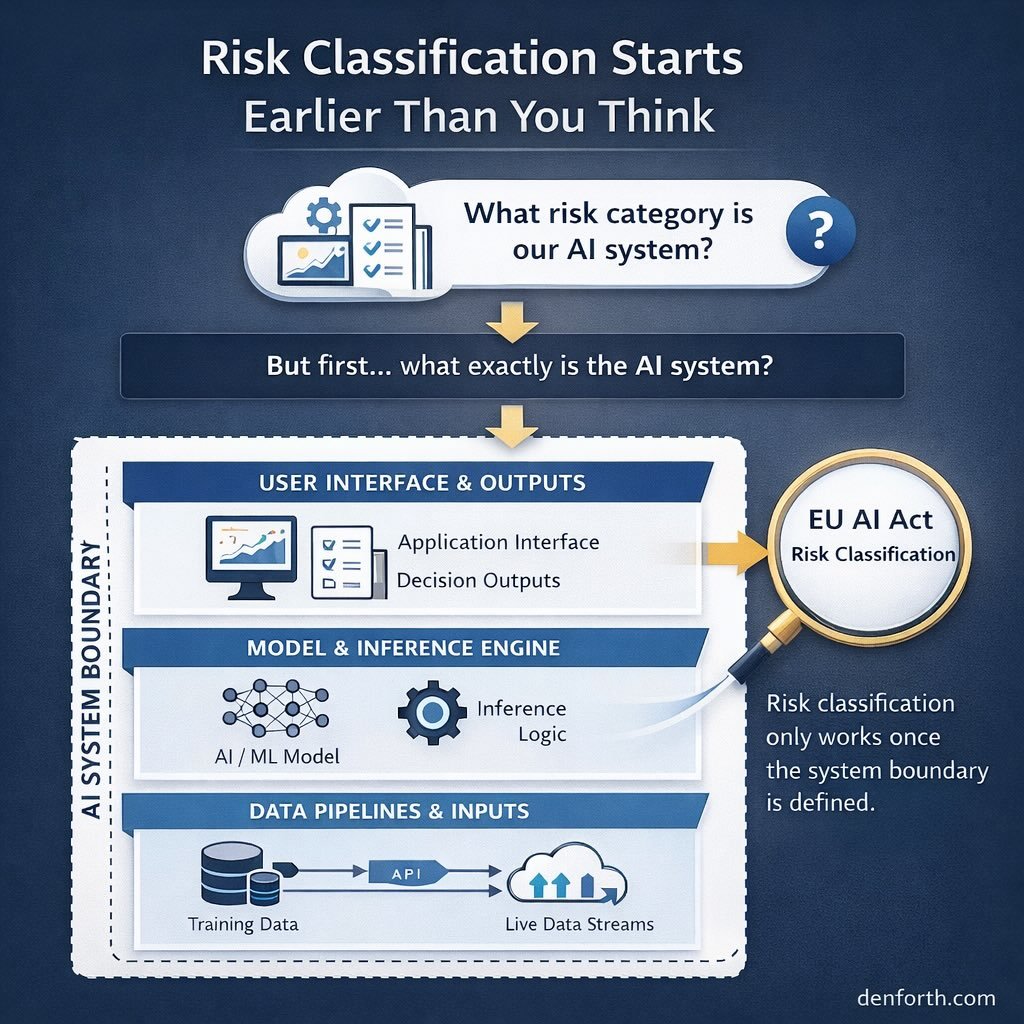

The real starting point: classification

The EU AI Act is not a documentation framework.

It is a classification-driven regulation.

Before anything else, you need to determine:

- whether your system qualifies as AI

- whether it falls into a prohibited category

- whether it is classified as high-risk

- whether it is subject only to limited transparency obligations

- or whether it falls outside all of these categories

This step is not administrative.

It is structural.

Because classification determines:

- which obligations apply

- how strict they are

- how your system must be designed

- what documentation is required

- what monitoring is expected post-deployment
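The questions above are ordered, and each one only makes sense once the previous one is settled. A minimal sketch of that ordering follows. Everything in it is hypothetical: the `Assessment` fields, the `RiskTier` names, and the `MINIMAL` fallback for systems that match none of the listed categories are illustrative labels, not terms from the regulation. The boolean answers stand in for a documented legal and product review, which no code can perform for you.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    # Hypothetical labels for illustration only.
    OUT_OF_SCOPE = "not an AI system"
    PROHIBITED = "prohibited category"
    HIGH_RISK = "high-risk"
    LIMITED_TRANSPARENCY = "limited transparency obligations only"
    MINIMAL = "none of the listed categories"


@dataclass(frozen=True)
class Assessment:
    # Each field records the conclusion of a human review of the
    # specific use case; the code only enforces the order of the questions.
    is_ai_system: bool
    is_prohibited: bool
    is_high_risk: bool
    has_transparency_duties: bool


def classify(a: Assessment) -> RiskTier:
    # Ask the questions in sequence and stop at the first one that applies.
    if not a.is_ai_system:
        return RiskTier.OUT_OF_SCOPE
    if a.is_prohibited:
        return RiskTier.PROHIBITED
    if a.is_high_risk:
        # A high-risk system may also carry transparency duties;
        # this sketch reports only the strictest tier.
        return RiskTier.HIGH_RISK
    if a.has_transparency_duties:
        return RiskTier.LIMITED_TRANSPARENCY
    return RiskTier.MINIMAL
```

The point of the sketch is the ordering, not the answers. A team that cannot fill in the four fields with confidence has not finished classification, whatever artifacts it has produced.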

Why teams get this wrong

There are three consistent patterns:

1. They treat compliance as documentation

Teams assume compliance means:

“We need policies and a technical file.”

But those are outputs.

Without correct classification, they are guesses.

2. They rely on generic interpretations

Instead of analysing their specific use case, teams:

- copy interpretations from articles

- rely on simplified summaries

- assume their system is “probably low-risk”

This leads to under-scoping or over-building.

Both are expensive.

3. They separate product and compliance thinking

Classification is not a legal exercise alone.

It requires understanding:

- the system architecture

- intended use

- deployment context

- user interaction

- downstream effects

When product and compliance are disconnected, classification becomes superficial.

The consequence: misaligned systems

If classification is wrong, everything downstream breaks:

- controls don’t match actual obligations

- documentation doesn’t satisfy audit expectations

- monitoring is incomplete

- governance structures are either excessive or insufficient

And eventually:

You don’t have a compliant system —

you have a collection of documents.

What correct sequencing looks like

A functional approach to EU AI Act compliance is simple in structure, but difficult in execution:

1. Define the use case clearly

2. Determine whether it qualifies as an AI system

3. Classify it under the regulation

4. Map the applicable obligations

5. Build the compliance system accordingly

Only after this should documentation begin.
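One way to make this sequence hard to skip is to encode it in whatever tooling tracks the work. The sketch below is an assumption about how a team might do that, not a prescribed method: the step names and the `ComplianceWorkflow` class are hypothetical, and the only rule it enforces is that steps are completed in the order listed above.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical step names mirroring the sequence above,
# with documentation deliberately placed last.
STEPS = (
    "define_use_case",
    "determine_ai_system",
    "classify",
    "map_obligations",
    "build_compliance_system",
    "documentation",
)


@dataclass
class ComplianceWorkflow:
    completed: List[str] = field(default_factory=list)

    def next_step(self) -> Optional[str]:
        # The next step is the first one not yet completed, or None when done.
        if len(self.completed) < len(STEPS):
            return STEPS[len(self.completed)]
        return None

    def complete(self, step: str) -> None:
        # Refuse any step that is not the next one in the sequence.
        expected = self.next_step()
        if step != expected:
            raise ValueError(
                f"cannot complete {step!r} yet; next step is {expected!r}"
            )
        self.completed.append(step)
```

Calling `complete("documentation")` on a fresh workflow raises `ValueError`, which is exactly the friction a team needs when it is tempted to start with templates.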

Why this matters now

The cost of getting this wrong is not just regulatory.

It affects:

- procurement readiness

- enterprise sales cycles

- investor confidence

- internal clarity

Increasingly, counterparties are not asking:

“Do you have documentation?”

They are asking:

“Do you understand your obligations?”

And that starts with classification.

Final thought

Most teams don’t fail because compliance is too complex.

They fail because they try to simplify it too early.

Under the EU AI Act, there is no shortcut around classification.

If you get that step wrong, everything after it becomes a correction exercise.

If you get it right, the rest becomes structured.